Voice control is the successor to the touch screen interface used in products such as smartphones, tablets, home automation systems, in-vehicle controls, vending machines, kiosks and Human Machine Interfaces (HMIs). It is the next big step in user interface (UI) design, especially for artificial intelligence enabled IoT devices, ranging from smart home products to industrial control panels. According to ABI Research, “last year, 141 million voice control smart home device shipped worldwide.” In 2020, “voice control device shipments will grow globally by close to 30% over 2019.”

Since the original Echo device was brought to market in 2014, Amazon’s Alexa Voice Service (AVS) has become one of the most popular voice recognition and natural language understanding (NLU) artificial intelligence services for building connected voice control IoT devices. These devices are known as Alexa built-in products. They can be built with industrial computers, panel PCs, tablet computers or other embedded systems that have microphones, a speaker and an Internet connection. With these products, you can start an interaction with a voice command, get responses from Alexa, connect to the cloud and control peripherals with your voice.

Besides the usual embedded control system layers such as hardware, operating system and application software, the design of an Alexa built-in product also involves a voice front end, a Wake Word Engine (WWE), Alexa Voice Service (AVS) cloud services, AVS Device SDK integration, and any extra communication and control interfaces.

Selecting a Voice Front End

The voice front end is the first stage of a voice control device, and it must pick up human speech accurately in a noisy environment. To ensure reliable wake-word triggering and deliver clear voice commands for interpretation, a typical voice front end uses software or hardware DSPs to implement technologies such as Acoustic Echo Cancellation (AEC), beamforming, noise suppression and a Wake Word Engine (WWE).

Loud music or speech played back by the device is picked up by its own microphones. Acoustic Echo Cancellation (AEC) subtracts this playback signal so that the microphones can still pick up voice commands. Beamforming uses multiple microphones to locate the source of speech and attenuate sound arriving from other directions. Noise suppression removes the remaining background noise to improve voice recognition. The Wake Word Engine (WWE) listens for the keyword (such as “Alexa”) and, once it is detected, sends the utterance that follows for speech recognition and understanding.
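To make the echo cancellation step more concrete, here is a minimal sketch of an adaptive echo canceller. It uses a normalized least-mean-squares (NLMS) filter, a common textbook approach chosen here for illustration; it is an assumption, not a description of what any particular DSP codec implements. The function names, the tap count and the simulated three-tap echo path are all hypothetical.

```python
import random


def simulate_echo(playback, path):
    """Model the speaker-to-microphone echo as a short FIR filter (assumed path)."""
    return [
        sum(gain * playback[n - k] for k, gain in enumerate(path) if n - k >= 0)
        for n in range(len(playback))
    ]


def nlms_echo_cancel(playback, mic, taps=8, mu=0.5, eps=1e-6):
    """Subtract an adaptive estimate of the playback echo from the mic signal."""
    weights = [0.0] * taps   # current estimate of the echo path
    history = [0.0] * taps   # most recent playback samples, newest first
    cleaned = []
    for x, d in zip(playback, mic):
        history = [x] + history[:-1]
        echo_estimate = sum(w * h for w, h in zip(weights, history))
        error = d - echo_estimate            # what remains after removing the echo
        norm = sum(h * h for h in history) + eps
        step = mu * error / norm             # step size normalized by input power
        weights = [w + step * h for w, h in zip(weights, history)]
        cleaned.append(error)
    return cleaned


def demo(samples=2000, seed=0):
    """Return (echo energy, residual energy) over the last quarter of the signal."""
    rng = random.Random(seed)
    playback = [rng.uniform(-1.0, 1.0) for _ in range(samples)]
    echo = simulate_echo(playback, [0.6, 0.3, 0.1])
    cleaned = nlms_echo_cancel(playback, echo)
    tail = samples // 4
    echo_energy = sum(v * v for v in echo[-tail:])
    residual_energy = sum(v * v for v in cleaned[-tail:])
    return echo_energy, residual_energy
```

In this simulation the microphone hears only the echo, so whatever is left in `cleaned` after the filter adapts is residual echo. A production front end would also need to handle a user speaking over the playback, which this sketch does not attempt.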

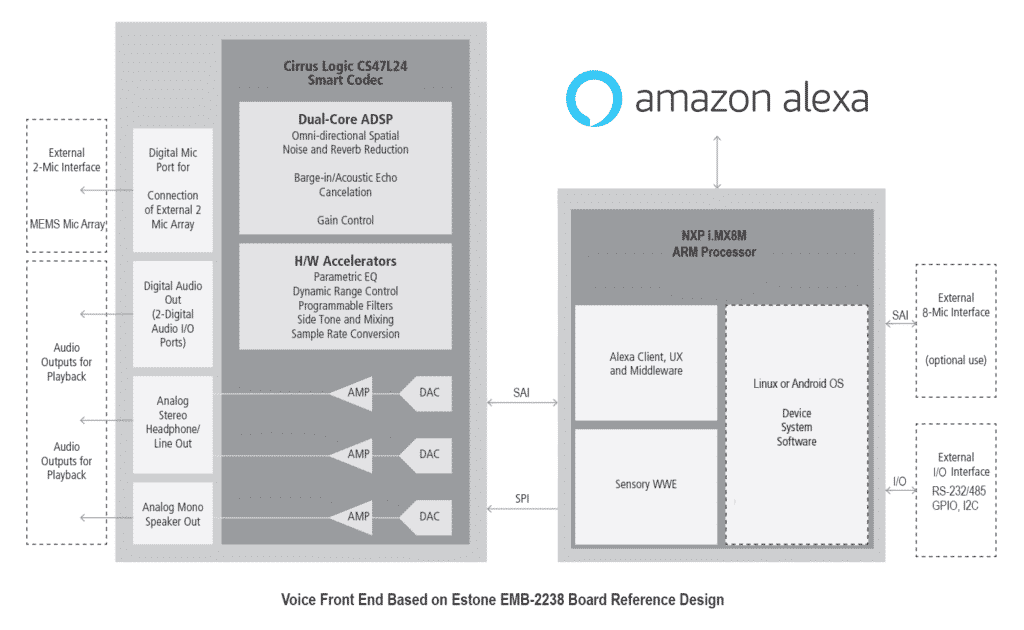

Estone’s EMB-2238 reference design is one example of a voice front end solution. It has a built-in Amazon-qualified hardware DSP smart codec for AEC and noise suppression, a high-performance digital MEMS microphone array for omni-directional spatial capture, and support for Sensory’s TrulyHandsfree wake word engine tuned to “Alexa”.

Embedding Alexa Voice Service (AVS)

Alexa Voice Service (AVS) is a cloud-based service that allows device developers to integrate Alexa features and functions into a connected voice enabled product. It provides access to complex speech recognition, natural language understanding, Alexa skills and capabilities in the cloud for Alexa built-in products.

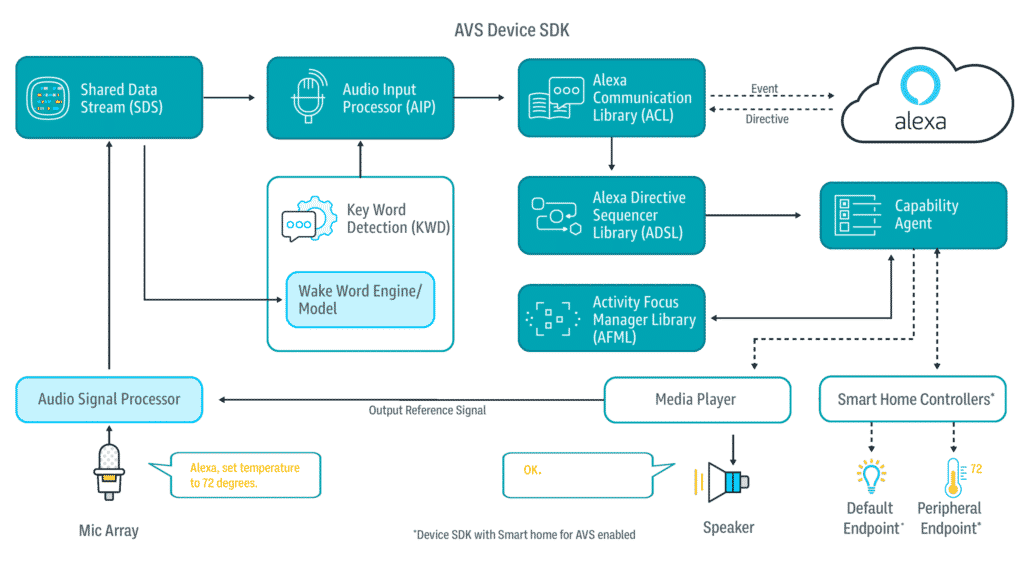

After selecting a voice front end with sufficient memory, processing power and a wake word-enabled microphone array, the next step for developers is to integrate the Alexa Voice Service Device SDK. The AVS Device SDK consists of C++ libraries that communicate with the cloud-based Alexa Voice Service. It also exposes AVS APIs for customizing the device application.

Suppose a user says “Alexa, turn on the AC”. The Wake Word Engine detects the keyword “Alexa”, and the device uses the AVS API to send the audio as a sound clip (an event) to the Alexa Voice Service in the cloud. AVS validates the wake word and uses automatic speech recognition (ASR) to turn the voice command into text. Then the natural language understanding (NLU) process interprets what the user wants and routes the request to the appropriate skill and action defined in the cloud. Finally, AVS sends Alexa’s spoken response, generated by a text-to-speech engine, or action messages back to the device’s AVS interface as directives.
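The round trip described above can be sketched as a toy simulation. This is not the AVS Device SDK or the real AVS protocol: plain text stands in for audio, `fake_cloud` is a hypothetical stand-in for ASR, NLU and skill routing, and the `ExampleDevice` namespace is invented for illustration. The `SpeechRecognizer`/`Recognize` and `SpeechSynthesizer`/`Speak` names are borrowed from AVS interfaces, but the payloads shown are simplified assumptions.

```python
WAKE_WORD = "alexa"


def detect_wake_word(utterance):
    """Stand-in for the Wake Word Engine: return the command that follows the keyword."""
    words = utterance.lower().replace(",", " ").split()
    if words and words[0] == WAKE_WORD:
        return " ".join(words[1:])
    return None


def build_recognize_event(command_audio):
    """Wrap the captured command in a simplified event message."""
    return {
        "namespace": "SpeechRecognizer",
        "name": "Recognize",
        "payload": {"audio": command_audio},
    }


def fake_cloud(event):
    """Hypothetical cloud side: 'ASR' is trivial because the audio is already text."""
    text = event["payload"]["audio"]
    if text == "turn on the ac":
        return [
            {"namespace": "ExampleDevice", "name": "TurnOn", "payload": {"target": "ac"}},
            {"namespace": "SpeechSynthesizer", "name": "Speak", "payload": {"text": "OK"}},
        ]
    return [
        {"namespace": "SpeechSynthesizer", "name": "Speak",
         "payload": {"text": "Sorry, I don't know that."}},
    ]


def handle_utterance(utterance):
    """Device side: only send an event to the cloud after the wake word is heard."""
    command = detect_wake_word(utterance)
    if command is None:
        return []  # no wake word, so nothing leaves the device
    return fake_cloud(build_recognize_event(command))
```

Calling `handle_utterance("Alexa, turn on the AC")` returns two directives in this toy model, one action message and one spoken response, while the same sentence without the wake word returns an empty list.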

The AVS Device SDK runs on Linux, Android, Windows and macOS. This video demonstrates the Alexa Voice Service integrated into a Yocto Linux based EMB-2238 voice control reference design.

Building Skill-free Alexa Voice Control

More and more developers are building IoT devices that use voice to control their own unique hardware or custom peripherals. These products are more complex than single-function smart light bulbs or smart plugs. For example, a recreational vehicle (RV) control panel controls HVAC, lighting, exhaust fans, the generator, the awning and much more. The peripherals being controlled are often connected via CAN bus, serial ports such as RS-232/485, general-purpose input/output (GPIO) or wireless links such as Wi-Fi, Zigbee and Z-Wave.

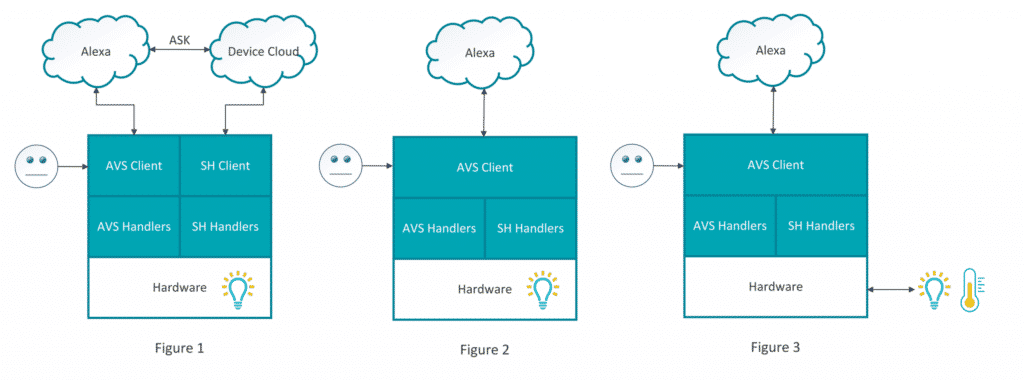

The standard way for developers to add Alexa voice control to their products is to build custom Alexa Skills for their devices and maintain them in a separate cloud. The device maker’s cloud then interfaces with both the Alexa Voice Service cloud and the connected device whose peripherals are being controlled (Figure 1).

However, in many cases the product being developed needs only a few simple types of control interface, such as the following:

- Power – turn a connected peripheral on or off, such as switching the exhaust fan on or off.

- Toggle – switch between two states, such as opening or closing the awning.

- Range – set a property of a peripheral to a value within a continuous range, such as setting the temperature of the HVAC.

- Mode – select one of a set of values for a device’s operating mode, such as the theme of the ambient lighting.

These control interfaces are part of the Alexa Smart Home Skill API capability interfaces. With the latest AVS Device SDK, developers can enable these smart home capabilities and add skill-free custom voice control to their AVS devices. Products that run the AVS client with Smart Home over AVS enabled can send and receive “Smart Home” events and directives for voice control over a single connection to the Alexa cloud (Figures 2 and 3). This video is a demonstration of Estone’s 7” PPC-4707 POE Panel PC development kit built with skill-free Alexa voice control.
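As an illustration of how the four control types might map onto device state, the sketch below dispatches simplified directives for a hypothetical RV panel. The namespaces and directive names (`Alexa.PowerController`, `Alexa.ToggleController`, `Alexa.RangeController`, `Alexa.ModeController`, `TurnOn`, `TurnOff`, `SetRangeValue`, `SetMode`) follow the Alexa Smart Home capability interfaces as we understand them. Everything else is an assumption made for this example: the `RvPanel` class, the peripheral names, the limits, and the use of an `instance` key for every interface. The real AVS Device SDK exposes these capabilities through C++ handler interfaces rather than a function like this.

```python
from dataclasses import dataclass, field

# Hypothetical limits and mode names for the example peripherals.
RANGE_LIMITS = {"hvac_temperature": (60, 85)}
ALLOWED_MODES = {"lighting_theme": {"Daylight", "Evening", "Movie"}}


@dataclass
class RvPanel:
    """Hypothetical in-memory state for an RV control panel."""
    power: dict = field(default_factory=lambda: {"exhaust_fan": False})
    toggles: dict = field(default_factory=lambda: {"awning": False})
    ranges: dict = field(default_factory=lambda: {"hvac_temperature": 72})
    modes: dict = field(default_factory=lambda: {"lighting_theme": "Daylight"})


def handle_directive(panel, namespace, name, instance, payload=None):
    """Apply one simplified Smart Home style directive to the panel state."""
    payload = payload or {}
    if namespace == "Alexa.PowerController" and name in ("TurnOn", "TurnOff"):
        panel.power[instance] = name == "TurnOn"
    elif namespace == "Alexa.ToggleController" and name in ("TurnOn", "TurnOff"):
        panel.toggles[instance] = name == "TurnOn"
    elif namespace == "Alexa.RangeController" and name == "SetRangeValue":
        low, high = RANGE_LIMITS[instance]
        value = payload["rangeValue"]
        if not low <= value <= high:
            raise ValueError(f"{instance} must be between {low} and {high}")
        panel.ranges[instance] = value
    elif namespace == "Alexa.ModeController" and name == "SetMode":
        mode = payload["mode"]
        if mode not in ALLOWED_MODES[instance]:
            raise ValueError(f"unknown mode {mode!r} for {instance}")
        panel.modes[instance] = mode
    else:
        raise ValueError(f"unsupported directive {namespace}.{name}")
    return panel
```

In a real product, each branch would go on to drive the actual peripheral over CAN bus, a serial port or GPIO instead of updating a dictionary, and the device would report the new state back to the cloud as an event.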

Voice control technology is redefining the Internet of Things. Many companies are choosing the Alexa Voice Service (AVS) to add voice control capability to their products. Efforts are underway to simplify the development experience, such as providing qualified voice front end reference designs, creating integrated AVS voice control development kits and introducing skill-free Smart Home for the AVS Device SDK, all of which help developers get their voice-enabled designs to market faster. Get your project started now.

To learn more about Estone’s AVS voice control reference design products, see Embedded ARM Boards and Industrial Panel PCs on our website or contact us for details of your project.